Inside Cobalt

A tour of the architecture, deploy flow, and tests behind our deployment platform.

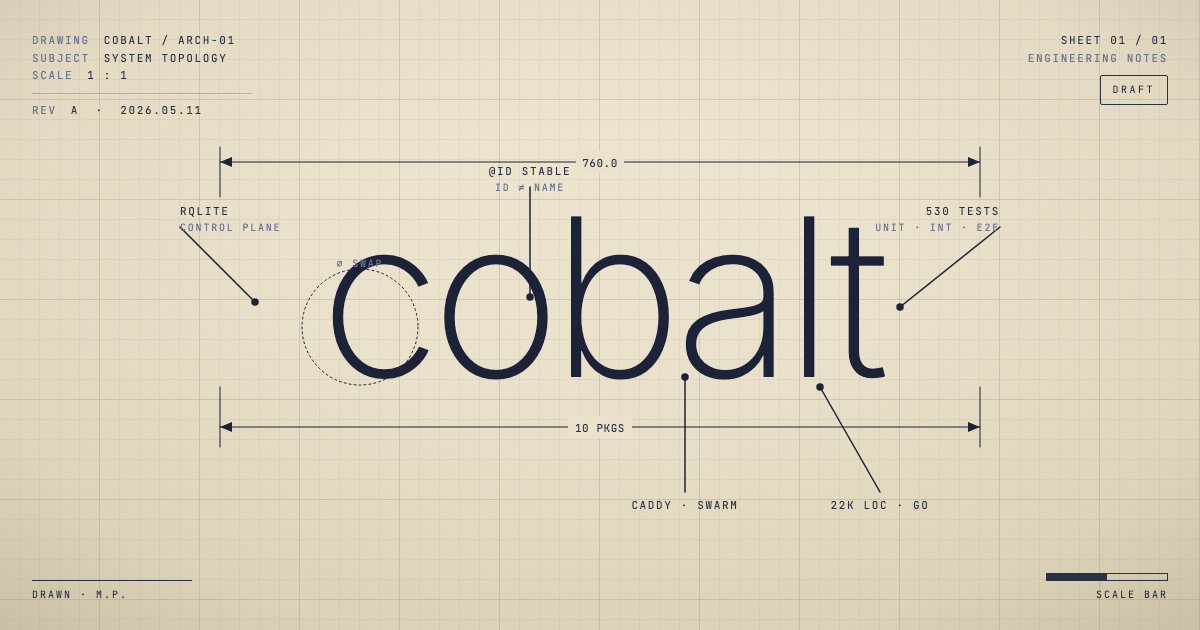

Cobalt is the open-source deployment platform now running Blue. It’s one Go binary: about 22,000 lines of production code, ~16,000 lines of tests, and 530 test functions across ten subsystems. It builds your repo into a Docker image, runs it under Docker Swarm, routes traffic through Caddy with automatic HTTPS, stores its state in rqlite, and exposes the whole thing through an HTTP API and a CLI, both served by the same binary.

This post is a tour: how the code is organized, what happens when you run cobalt deploy, and what we built around the deploy path to keep it honest. It’s the deep-dive companion to Why We Built Cobalt, the engineering view of the same system.

The shape of the binary

Every subsystem lives under internal/server/ as its own package. Cross-package boundaries are interfaces; nothing reaches inside another package’s internals. The list:

```
internal/server/
├── store        # rqlite-backed control plane
├── caddy        # admin API client
├── docker       # Swarm wrapper
├── cobaltfile   # cobalt.json parser
├── github       # App JWT + webhooks
├── deploy       # orchestrator + state machine
├── worker       # scheduled background jobs
├── encryption   # AES-GCM for env vars
├── middleware   # auth, logging, panic recovery
└── api          # HTTP endpoints
```

That’s the whole topology. There are ten packages, each named after what it does, and each small enough that a single engineer can read one end-to-end in an hour. The deploy orchestrator is the only place that touches multiple subsystems (store, caddy, docker, cobaltfile), and even there it talks through interfaces, not concrete types.

The single-binary discipline isn’t decorative. It’s what lets the CLI and the daemon share the same code paths: cobalt deploy (CLI) and POST /api/projects/{id}/deploy (daemon HTTP) both end up calling into the same deploy package. Bugs found by the CLI are fixed in one place. Tests written against the orchestrator cover both surfaces.

The store: rqlite as a control plane

State lives in rqlite, which is SQLite over Raft. We chose it over Postgres for two reasons. First, it ships embedded — a fresh Cobalt server doesn’t need a separate database to bring up. Second, when we go multi-node, the Raft replication is already there; the data layer doesn’t need a migration.

The schema is plain SQL files run by an embedded migration runner:

```
internal/server/store/migrations/
├── 0001_init.sql
├── 0002_apikeys.sql
├── 0003_github_apps.sql
├── ...
```

Tables are exactly what you’d expect: projects, deployments, env_vars, domains, apikeys, github_apps, github_app_installations, github_app_repos. Every row that anchors infrastructure carries a stable int64 ID. Display names are just metadata: Docker labels, Caddy @ids, and internal lookups are all keyed by the ID.

Env vars are encrypted at rest with AES-GCM. The key lives on disk under --data-dir. The encryption package owns the AEAD construction; nothing else gets to touch raw ciphertext.

The cobaltfile: config as the contract

Every project has a cobalt.json in its repo. The cobaltfile package parses it once into a typed Cobaltfile struct, and that’s what every other layer of the system reasons about. The parser is strict: unknown fields are rejected, not silently dropped. A typo in your config fails at parse time, not three layers deep into Docker.

```json
{
  "services": {
    "web": {
      "type": "container",
      "port": 3000,
      "build": { "dockerfile": "Dockerfile" }
    },
    "hourly-rollup": {
      "type": "cron",
      "schedule": "0 * * * *",
      "command": ["node", "scripts/rollup.js"]
    }
  }
}
```

Services come in five types: container, cron, command, static, generator. Hooks are services too: hook:deploy:start:before and hook:deploy:start:after are validated as type=command with a non-empty argv. The parser has 32 unit tests. They cover defaults, every service type, hook validation, and every known error path. They also cover the actual cobaltfile shapes from Blue’s API and frontend, so a refactor can’t quietly break our own configs.

This sounds boring. It is the contract every other subsystem depends on, and getting it right at the boundary means none of the downstream packages need defensive parsing.

Anatomy of a deploy

When you push to a Cobalt-managed repo, GitHub fires a webhook. The signature is verified against the App’s webhook secret using hmac.Equal — constant-time, no shortcuts. A push event on the project’s tracked branch enqueues a new row in deployments.

The dispatcher is a worker pool that reads from the queue. When it picks up a row, it transitions the deployment from queued → fetching and hands it to the orchestrator’s Run:

```go
// internal/server/deploy/orchestrator.go

func (o *Orchestrator) Run(ctx context.Context, dep store.Deployment) (err error) {
	project, err := o.getProject(ctx, dep.ProjectID)
	if err != nil {
		return fmt.Errorf("deploy: project: %w", err)
	}

	// ... open per-deploy log file ...

	envVars, err := o.DB.EnvVarMap(ctx, project.ID)
	if err != nil {
		return fmt.Errorf("deploy: env vars: %w", err)
	}

	// PHASE 1: prepare. Reversible, no Caddy touch.
	ws, err := o.Preparer.Prepare(ctx, *project, dep)
	if err != nil {
		return fmt.Errorf("deploy: prepare: %w", err)
	}

	// ... persist resolved cobaltfile to rqlite ...

	// Preflight: a `web` service needs at least one domain attached.
	if _, hasWeb := ws.Cobaltfile.Services["web"]; hasWeb {
		domains, err := o.DB.ListDomainsForProject(ctx, project.ID)
		if err != nil {
			return fmt.Errorf("deploy: list domains: %w", err)
		}
		if len(domains) == 0 {
			return fmt.Errorf("deploy: project %q has a web service but no domains attached", project.Name)
		}
	}

	o.DB.SetDeploymentStatus(ctx, dep.ID, cobaltapi.StateBuilding)

	built, err := o.Builder.Build(ctx, *project, dep, ws, out)
	if err != nil {
		return fmt.Errorf("deploy: build: %w", err)
	}

	return o.cutover(ctx, log, project, dep, built, ws.Cobaltfile, envVars, out)
}
```

Phase 1 is everything that can be undone by not committing. It clones the repo and parses the cobaltfile. It persists the parsed cobaltfile to rqlite so the background reconciler has authoritative desired state. It then preflights the domains and builds the Docker image. If anything fails here, no public-facing state has been touched: the deployment row goes to failed and traffic keeps flowing to the previous version.

Phase 2 is the cutover:

```go
func (o *Orchestrator) cutover(...) error {
	// Ensure networks, run generators + before-hook
	// ...

	o.DB.SetDeploymentStatus(ctx, dep.ID, cobaltapi.StateSwapping)

	startedServices, err := startServicesPhase(ctx, o.Docker, *project, dep, built, envVars, deploymentNetwork, out)
	if err != nil {
		_ = stopServices(context.Background(), o.Docker, startedServices)
		return err
	}

	if err := waitHealthyAll(ctx, o.Docker, *project, dep, built, out); err != nil {
		_ = stopServices(context.Background(), o.Docker, startedServices)
		return err
	}

	// Swarm says the task is running, but "running" only means the
	// process started; the app may still be opening its socket,
	// loading config, warming a cache, etc. Probe the web service's
	// port from inside cobalt-caddy before we route traffic; otherwise
	// we'll cut over to a backend that 502s.
	if err := waitHTTPReady(ctx, o.Docker, *project, dep, cf, out); err != nil {
		_ = stopServices(context.Background(), o.Docker, startedServices)
		return err
	}

	// Atomic from the public's POV.
	if err := commitCaddySwap(ctx, o.Caddy, o.DB, *project, dep, cf); err != nil {
		revertCaddySwap(context.Background(), log, o.Caddy, o.DB, *project, cf)
		_ = stopServices(context.Background(), o.Docker, startedServices)
		return fmt.Errorf("deploy: commit caddy: %w", err)
	}

	// ... after-hook, cleanup ...

	return nil
}
```

The shape that matters is this: Swarm-level health (waitHealthyAll) is not enough. Swarm reports a task as running once its process has started, which says nothing about whether the app is serving. The new container can be running while Caddy would still return 502 for every request routed to it, because the app hasn’t opened its socket yet. So we add waitHTTPReady, an actual TCP/HTTP probe from inside the Caddy container against the new upstream. It has to pass before we route a single byte of public traffic to the new container.

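When the Caddy swap succeeds, the cutover is atomic from the public’s perspective. When it fails, we revert to the last known-good upstream and tear down the new services so they don’t linger. That cleanup runs under context.Background() rather than the deploy’s ctx. A deploy that has been canceled or has timed out still tears down what it started.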

Why two phases

The two-phase split — reversible before commit, atomic at commit — is the most important shape in the orchestrator. Phase 1 can take 90 seconds (clone + build + push). If anything in Phase 1 fails, the running service never knew there was a deploy in flight. Phase 2 is seconds and is structured so the only public-facing mutation — the Caddy PATCH — is the last thing that happens, after every readiness probe has passed.

The deploy state machine reflects the same split: Fetching → Building → Swapping → terminal. The first two are Phase 1. Swapping is the moment we cross the line, and after it a failure has to trigger an explicit revert. Both phases are exhaustively covered by tests, but failure carries different weight on either side of the boundary. The orchestrator’s code reads like a flowchart you could trace with a finger.
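One way to encode that split is an explicit transition table. An illegal jump, such as Building back to Fetching, then becomes an error instead of a silent status write. Here is a minimal sketch. The state names follow the post, except `Live`, a hypothetical name for the successful terminal state. Letting every non-terminal state move to `Failed` is also an assumption.

```go
package main

import "fmt"

type State string

const (
	Queued   State = "queued"
	Fetching State = "fetching"
	Building State = "building"
	Swapping State = "swapping"
	Live     State = "live" // hypothetical name for the success terminal state
	Failed   State = "failed"
)

// transitions lists the legal next states; terminal states have none.
var transitions = map[State][]State{
	Queued:   {Fetching, Failed},
	Fetching: {Building, Failed}, // Phase 1
	Building: {Swapping, Failed}, // Phase 1
	Swapping: {Live, Failed},     // Phase 2
}

// advance returns next if the move from cur is legal, otherwise an error.
func advance(cur, next State) (State, error) {
	for _, s := range transitions[cur] {
		if s == next {
			return next, nil
		}
	}
	return cur, fmt.Errorf("illegal transition %s -> %s", cur, next)
}

// needsRevert reports whether a failure in state s is past the commit line,
// where the post says an explicit revert is required.
func needsRevert(s State) bool { return s == Swapping }

func main() {
	s := Queued
	for _, next := range []State{Fetching, Building, Swapping, Live} {
		var err error
		if s, err = advance(s, next); err != nil {
			panic(err)
		}
		fmt.Println("->", s)
	}
	_, err := advance(Live, Failed)
	fmt.Println(err) // illegal transition live -> failed
}
```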

The test pyramid

Cobalt has 530 test functions across the ten subsystems. The breakdown by package:

| Package | Test functions | What’s covered |
|---|---|---|
| api | 83 | Every HTTP endpoint, every error path, auth, content-type negotiation |
| deploy | 66 | Orchestrator phases, prepare, build, cutover, rollback, queue, dispatcher |
| docker | 56 | Argv shapes, label injection, deterministic env/secret ordering, missing-resource idempotency |
| worker | 51 | Caddy reconciler, image cleanup, network cleanup, cron, scheduler, log rotation |
| cobaltfile | 32 | Schema validation, every service type, every error path, real Blue configs |
| store | 28 | CRUD per resource, migrations, AES-GCM env encryption |
| github | 25 | App JWT, webhook HMAC verify, push/install event parsing, repo pagination |
| caddy | 22 | Wire shapes, route lifecycle, error mapping, PATCH-then-verify, init config |
| encryption | 12 | AEAD construction, key handling |

That’s the base of the pyramid: ~530 fast tests, each running in milliseconds, all driven by fakes that satisfy the same Go interfaces the real subsystems implement.

The interface discipline is the thing that makes this work. Every subsystem the orchestrator depends on is reached through a small interface. The Caddy dependency, for example, is exactly this surface:

```go
// internal/server/deploy/swap.go

type SwapCaddy interface {
	SetDomainsForProject(ctx context.Context, projectID int64, domains []string) error
	VerifyServeService(ctx context.Context, projectID int64, container string, port int) error
	ServeStaticSite(ctx context.Context, projectID int64, projectName string, deploymentNumber int) error
	ServeService(ctx context.Context, projectID int64, container string, port int) error
}
```

Production wiring satisfies it with *caddy.Client. Tests satisfy it with a fake that records what was called and returns canned responses, so the test can assert on the call pattern. The same goes for SwapStore, the Docker runner, the Preparer, the Builder, and the CronReconciler. Every dependency of the orchestrator is reached through an interface, so the orchestrator’s tests don’t need Docker or Caddy running at all.

Above the unit layer sit two integration suites. They are gated behind the integration build tag and spin up real Docker Swarm, real Caddy, and a real Cobalt daemon in containers. The tests so far are:

```
// Chaos suite: tests that Cobalt does the right thing when its
// dependencies misbehave.
TestChaos_CaddyReturns500_RetriesWithBackoff
TestChaos_ConcurrentDeployAndReconcile
TestChaos_Reconciler_SkipsInFlightProjects

// Cutover suite: tests the deploy-time rollback path against real
// containers.
TestCutover_HealthcheckFails_RollsBackCleanly
```

Each chaos test spins up Swarm, Caddy, and the daemon in a fresh cobalt-chaos-{randomID} network, drives a real deploy, and injects a specific failure: Caddy returning 500s, a deploy racing the reconciler, a project being in StateSwapping when the reconciler sweeps. The daemon’s correctness is tested against real dependencies, not against fakes.

Above the integration layer sit end-to-end tests: a separate e2e/ directory that drives the daemon’s HTTP API against a real running stack and asserts on real network behavior. There are six scenarios today:

```
TestPrimaryDeploy            // happy path, end-to-end
TestApexWithWWW              // www.example.com → example.com redirect routing
TestRedirectTo               // arbitrary domain → other domain
TestRemoveCascadesRedirects  // removing the source kills the redirect
TestSlowStartup              // app that takes 8s to open its socket
TestCrashLoop                // app that crashes on boot: does Cobalt notice?
```

The pyramid in totals: ~530 unit tests, four integration tests across two suites, six e2e scenarios. The tests-to-production ratio is ~0.72 LOC of test for every LOC of production code (15,697 / 21,946). That ratio is the spine of the project’s confidence: a typical change touches five or six files and ships with a dozen new test cases.

What this gets us

The cycle from “edit code” to “know it works” is roughly this: go test ./... runs in seconds, the integration suite runs in a minute, and the e2e suite runs in a few minutes. A bug surfaced in production gets a regression test the same day. A new feature gets unit tests against fakes, then an integration test against real containers, and occasionally an e2e scenario if it changes public behavior.

The flip side of that ratio is that everything in the production tree is testable by construction. Subsystems that need to be tested in isolation expose interfaces. Subsystems that need their wire behavior tested expose plain HTTP / Caddy admin / Docker daemon surfaces that integration tests drive directly. Nothing in the codebase is a black box from inside Cobalt’s perspective.

The lesson, if there is one: a small team building serious infrastructure doesn’t get to skip the discipline that bigger teams rely on. We just package it into a single binary, a small number of packages, and an interface-and-fakes pattern that scales naturally with the codebase.

Cobalt’s job is to be boring. Twenty-two thousand lines of Go, sixteen thousand lines of tests, and the only thing the operator should ever notice is that deploys keep working.

— Manny